
Anaconda makes it easy to install TensorFlow, enabling your data science, machine learning, and artificial intelligence workflows. This page shows how to install TensorFlow with the conda package manager included in Anaconda and Miniconda. TensorFlow with conda is supported on 64-bit Windows 7 or later. The command is `conda create --name tfgpu tensorflow-gpu`. Is that really it? This command creates an environment named `tfgpu` and installs all the packages required by `tensorflow-gpu`, including compatible versions of CUDA and cuDNN.
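If you prefer to script the setup, here is a minimal Python sketch that builds the same `conda create` command and can optionally run it. It assumes `conda` is on your `PATH`. The helper names `create_env_command` and `create_env` are hypothetical, and `--yes` is added only to skip conda's confirmation prompt.

```python
import shutil
import subprocess
from typing import List, Sequence


def create_env_command(name: str, packages: Sequence[str]) -> List[str]:
    """Build the argument list for `conda create --name NAME PKG...`."""
    # conda's long option is --name (short form -n); --yes skips the prompt.
    return ["conda", "create", "--yes", "--name", name, *packages]


def create_env(name: str, packages: Sequence[str]) -> bool:
    """Run `conda create` if conda is on PATH; return True on success."""
    if shutil.which("conda") is None:
        return False  # conda not found, so nothing was run
    result = subprocess.run(create_env_command(name, packages))
    return result.returncode == 0


if __name__ == "__main__":
    # Print the command rather than running it.
    print(" ".join(create_env_command("tfgpu", ["tensorflow-gpu"])))
```

Building the argument list separately from running it makes the command easy to inspect or log before anything is installed.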

In this post, we describe how we installed TensorFlow with Anaconda on the 4-GPU workstation Hydra.

Anaconda Python

Anaconda is a freemium open source distribution of Python. Anaconda provides high performance computing with:

One should use Anaconda Python, rather than the stock Python that comes with your Linux distros, for any serious computation.

1) Download the latest Anaconda3 for Linux Installer (v5.0.1 as of this writing) and install it:

This will install Python 3.6. The default install location is $HOME/anaconda3, so any user can install a private copy. I chose to install it at the system location /opt/anaconda3, which makes it available to all users.

2) Update Anaconda 3:

3) Download the latest Anaconda2 for Linux Installer (v5.0.1 as of this writing) and install it:

This will install Python 2.7. The default install location is $HOME/anaconda2, so any user can install a private copy. I chose to install it at the system location /opt/anaconda2, which makes it available to all users.

4) Update Anaconda 2:

Note that we use the `module` command here to make it easy to switch between the multiple Python distributions installed on Hydra.

One crucial reason that Anaconda Python provides much higher performance than the stock Python is that it uses the highly optimized Intel MKL for some of the most popular numerical and scientific Python libraries, including NumPy, SciPy, and scikit-learn.
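To check which BLAS library your own NumPy build uses, here is a minimal sketch. It assumes a recent NumPy release, in which `numpy.show_config(mode="dicts")` returns the build configuration as a dictionary. Older releases only print their configuration, so the function returns `None` there, and you can read the output of `numpy.show_config()` by eye instead. The names `numpy_blas_name` and `uses_mkl` are hypothetical.

```python
from typing import Optional


def numpy_blas_name() -> Optional[str]:
    """Return the BLAS library name NumPy was built against, if it can be found."""
    try:
        import numpy as np
    except ImportError:
        return None  # NumPy is not installed
    try:
        # Recent NumPy releases return the build configuration as a dict.
        config = np.show_config(mode="dicts")
    except (TypeError, ValueError):
        return None  # older NumPy: show_config() only prints
    if not isinstance(config, dict):
        return None
    deps = config.get("Build Dependencies", {})
    blas = deps.get("blas", {}) if isinstance(deps, dict) else {}
    name = blas.get("name") if isinstance(blas, dict) else None
    return name if isinstance(name, str) else None


def uses_mkl(blas_name: Optional[str]) -> bool:
    """Heuristic: MKL builds report a BLAS name containing 'mkl'."""
    return blas_name is not None and "mkl" in blas_name.lower()


if __name__ == "__main__":
    name = numpy_blas_name()
    print(name, "(MKL)" if uses_mkl(name) else "")
```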

Incorrect way of installing TensorFlow with Anaconda

Before I show you the proper way of installing TensorFlow with Anaconda, I’d like to point out that there are a couple of deficiencies in the official TensorFlow documentation on Installing with Anaconda. Let’s follow the instructions step-by-step.

1) We’ve already downloaded and installed Anaconda.

2) Create a conda environment named tensorflow to run Python 3.6 (as an unprivileged user):

3) Activate the conda environment:

4) Install the latest TensorFlow release (1.4.0 as of this writing) inside the conda environment:

However, when I tried to import the tensorflow module, I got an error:

Apparently, the module was compiled against the wrong Python version! Admittedly, installing TensorFlow this way might work with earlier Python versions, such as 2.7 and 3.5, but not with 3.6!
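One way to catch this kind of mismatch before installing is to compare the Python tag in a wheel's filename with the running interpreter. The sketch below assumes the standard wheel filename convention from PEP 427 (`name-version[-build]-pythontag-abitag-platform.whl`). The helper names are hypothetical, and the filenames in the tests are illustrative, not the exact file the TensorFlow documentation pointed to.

```python
import sys
from typing import Optional, Tuple


def wheel_python_tag(filename: str) -> str:
    """Return the Python tag (e.g. 'cp36') from a wheel filename (PEP 427)."""
    if not filename.endswith(".whl"):
        raise ValueError(f"not a wheel filename: {filename}")
    parts = filename[: -len(".whl")].split("-")
    if len(parts) < 5:
        raise ValueError(f"malformed wheel filename: {filename}")
    # The last three fields are always: python tag, ABI tag, platform tag.
    return parts[-3]


def wheel_matches_interpreter(filename: str,
                              version: Optional[Tuple[int, int]] = None) -> bool:
    """True if the wheel's CPython tag(s) include the given (major, minor) version.

    Only exact CPython tags are checked; pure-Python tags such as 'py3'
    are not handled by this sketch.
    """
    major, minor = version if version is not None else sys.version_info[:2]
    wanted = f"cp{major}{minor}"
    # Compressed tag sets such as 'cp35.cp36' list several tags separated by dots.
    return wanted in wheel_python_tag(filename).split(".")
```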

In the above output we note that numpy was also installed in the conda environment, as a dependency of TensorFlow. However, this numpy module is not built with MKL, but rather with OpenBLAS!

The OpenBLAS libraries are presumably located in /usr/local/lib. However, that directory is empty! It’s not worth our time to investigate further. Let’s deactivate the conda environment:

then remove the conda environment:

Proper way of installing TensorFlow with Anaconda

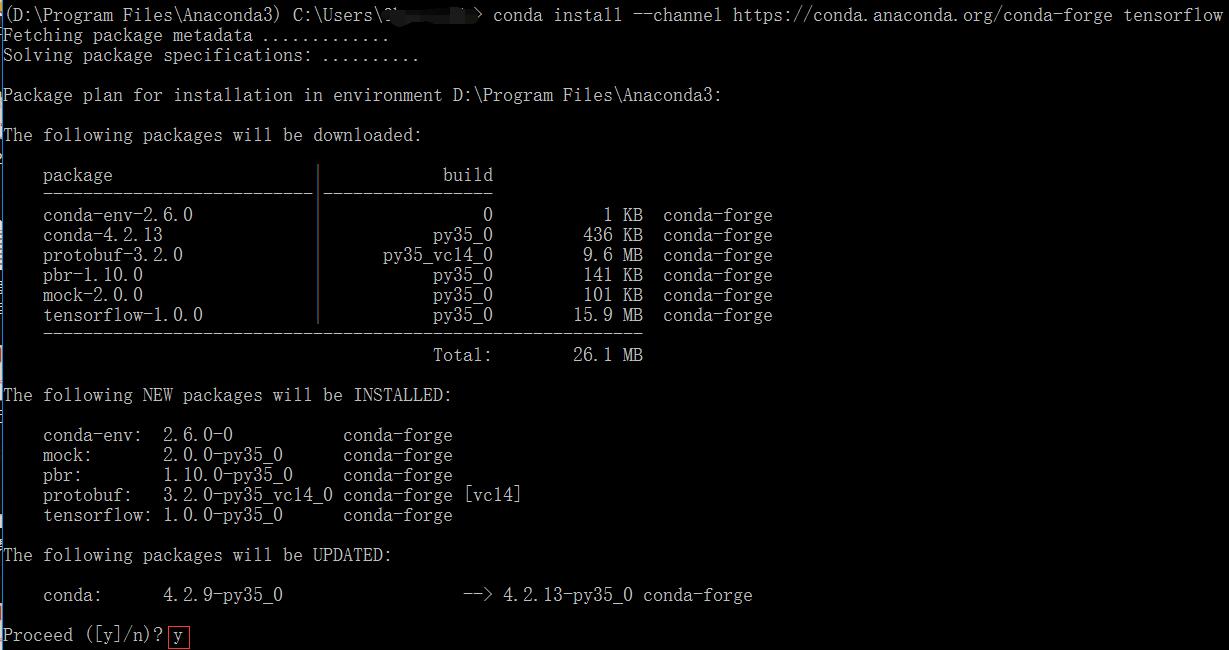

In fact, Conda provides TensorFlow packages in its default channel / repository! As of this writing, the latest TensorFlow version in the default channel is 1.3.0, which is slightly behind the latest official release of TensorFlow (1.4.0).

Installing TensorFlow is easy:

Note that conda installed its own copies of CUDA 8.0 and cuDNN 6.0 in Anaconda, so TensorFlow doesn’t depend on external CUDA and cuDNN libraries to function!
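A quick way to confirm that a conda-installed TensorFlow can actually use the GPU is to ask TensorFlow itself. The minimal sketch below returns `None` when TensorFlow is not importable. It uses `tf.config.list_physical_devices("GPU")` on newer releases that provide it, and falls back to `tf.test.is_gpu_available()` on TensorFlow 1.x, such as the 1.3.0 conda package. The function name is hypothetical.

```python
from typing import Optional


def tensorflow_sees_gpu() -> Optional[bool]:
    """Return True/False if TensorFlow is importable, or None if it is not."""
    try:
        import tensorflow as tf
    except ImportError:
        return None  # TensorFlow is not installed in this environment
    config = getattr(tf, "config", None)
    if config is not None and hasattr(config, "list_physical_devices"):
        # Newer TensorFlow releases (2.1 and later)
        return len(config.list_physical_devices("GPU")) > 0
    # TensorFlow 1.x
    return bool(tf.test.is_gpu_available())


if __name__ == "__main__":
    print("GPU visible to TensorFlow:", tensorflow_sees_gpu())
```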

Similarly, we’ve also installed TensorFlow with Anaconda2:

Installing Keras with Anaconda

At this point, it should be no surprise that Keras is also included in the default conda channel; so installing Keras is also a breeze.

Install Keras with Anaconda3:

Install Keras with Anaconda2:

The Anaconda Distribution includes several packages that use the GPU as an accelerator to increase performance, sometimes by a factor of five or more. These packages can dramatically improve machine learning and simulation use cases, especially deep learning. Read more about getting started with GPU computing in Anaconda.

While both AMD and NVIDIA are major vendors of GPUs, NVIDIA is currently the most common GPU vendor for machine learning and cloud computing. The information on this page applies only to NVIDIA GPUs. As of August 27th, 2018, experimental AMD GPU packages for Anaconda are in progress but not yet officially supported.

GPU compatibility

GPU acceleration requires the author of a project such as TensorFlow to implement GPU-specific code paths for algorithms that can be executed on the GPU. A GPU-accelerated project will call out to NVIDIA-specific libraries for standard algorithms or use the NVIDIA GPU compiler to compile custom GPU code. Only the algorithms specifically modified by the project author for GPU usage will be accelerated, and the rest of the project will still run on the CPU.

For most packages, GPU support is either a compile-time or run-time choice, allowing a variant of the package to be available for CPU-only usage. When GPU support is a compile-time choice, Anaconda will typically need to build two versions of the package, to allow the user to choose between the “regular” version of the project that runs on CPU only and the “GPU-enabled” version of the project that runs on GPU.

Due to the different ways that CUDA support is enabled by project authors, there is no universal way to detect GPU support in a package. For many GPU-enabled packages, there is a dependency on the `cudatoolkit` package. Other packages such as Numba do not have a `cudatoolkit` dependency, because they can be used without the GPU.

Hardware requirements

NVIDIA released the CUDA API for GPU programming in 2006, and all new NVIDIA GPUs released since that date have been CUDA-capable regardless of market. Although any NVIDIA GPU released in the last 5 years will technically work with Anaconda, these are the best choices for machine learning and specifically model training use cases:

- Tesla P100 or V100

- Titan Xp or V

- GeForce 1080 or 1080 Ti

- Various recent Quadro models

Deployed models do not always require a GPU. When a GPU is required for a deployed model, there are other Tesla GPU models that are more optimized for inference than training, such as the Tesla M4, M40, P4, and P40.

Cloud and on-premise data center deployments require Tesla cards, whereas the GeForce, Quadro, and Titan options are suitable for use in workstations.

Most users will have an Intel or AMD 64-bit CPU. We recommend having at least two to four times more CPU memory than GPU memory, and at least 4 CPU cores to support data preparation before model training. There are a limited number of Anaconda packages with GPU support for IBM POWER 8/9 systems as well.
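The sizing guideline is simple arithmetic. The sketch below applies it, treating "GPU memory" as the total across all GPUs in the machine, which is an assumption. The function names are hypothetical, and the 4 x 12 GB configuration in the usage line is a made-up example, not Hydra's actual specification.

```python
from typing import Tuple


def recommended_cpu_memory_gb(total_gpu_mem_gb: float) -> Tuple[float, float]:
    """Return the (low, high) CPU-memory range: 2x to 4x the total GPU memory."""
    return 2 * total_gpu_mem_gb, 4 * total_gpu_mem_gb


def meets_host_recommendation(cpu_mem_gb: float, total_gpu_mem_gb: float,
                              cpu_cores: int) -> bool:
    """Check the minimum rule of thumb: CPU RAM >= 2x GPU memory and >= 4 cores."""
    low, _ = recommended_cpu_memory_gb(total_gpu_mem_gb)
    return cpu_mem_gb >= low and cpu_cores >= 4


if __name__ == "__main__":
    # Hypothetical workstation: 4 GPUs with 12 GB each = 48 GB of GPU memory.
    print(recommended_cpu_memory_gb(4 * 12))
```

For that hypothetical 48 GB of GPU memory, the guideline suggests 96 to 192 GB of system RAM.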

Software requirements

The best performance and user experience for CUDA is on Linux systems. Windows is also supported. Apple has not released any computer with an NVIDIA GPU since 2014, so Macs that do have one are older models that generally lack the memory for machine learning applications, and their GPU support is limited to Numba.

Anaconda requires that the user has installed a recent NVIDIA driver that meets the version requirements in the table below. Anaconda does not require the installation of the CUDA SDK.

Ubuntu and some other Linux distributions ship with a third-party open-source driver for NVIDIA GPUs called Nouveau. CUDA requires replacing the Nouveau driver with the official closed-source NVIDIA driver.

All other CUDA libraries are supplied as conda packages.
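Because CUDA libraries arrive as conda packages, you can see which ones an environment contains from the output of `conda list --json`. That command lists each installed package with fields including `name` and `version`. The sketch below filters that output for `cudatoolkit` and `cudnn`. The helper names are hypothetical, and the sample JSON in the tests is made up for illustration.

```python
import json
import shutil
import subprocess
from typing import Dict, Optional


def cuda_packages(conda_list_json: str) -> Dict[str, str]:
    """Return {package: version} for CUDA-related packages in `conda list --json` output."""
    wanted = {"cudatoolkit", "cudnn"}
    return {
        pkg["name"]: pkg["version"]
        for pkg in json.loads(conda_list_json)
        if pkg.get("name") in wanted
    }


def current_env_cuda_packages() -> Optional[Dict[str, str]]:
    """Inspect the active environment; return None if conda is not on PATH."""
    if shutil.which("conda") is None:
        return None
    out = subprocess.run(["conda", "list", "--json"],
                         capture_output=True, text=True, check=True).stdout
    return cuda_packages(out)
```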

GPU-enabled packages are built against a specific version of CUDA. Currently supported versions include CUDA 8, 9.0, and 9.2. The NVIDIA drivers are designed to be backward compatible with older CUDA versions, so a system with NVIDIA driver version 384.81 can support CUDA 9.0 packages and earlier. As a result, if a user is not using the latest NVIDIA driver, they may need to manually pick a particular CUDA version by selecting the version of the `cudatoolkit` conda package in their environment. To select a `cudatoolkit` version, add a selector such as `cudatoolkit=8.0` to the version specification.

Required NVIDIA driver versions, excerpted from the NVIDIA CUDA Toolkit Release Notes:

| CUDA Version | Linux x86_64 Driver Version | Windows x86_64 Driver Version |
|---|---|---|
| CUDA 8.0 (8.0.61 GA2) | >= 375.26 | >= 376.51 |
| CUDA 9.0 (9.0.76) | >= 384.81 | >= 385.54 |
| CUDA 9.2 (9.2.88) | >= 396.26 | >= 397.44 |
| CUDA 9.2 (9.2.148 Update 1) | >= 396.37 | >= 398.26 |
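To make the driver table concrete, here is a minimal sketch that finds the newest CUDA version a given driver supports and turns it into a `cudatoolkit` selector. It encodes only the rows of the driver-version table, using the 9.2.88 row for CUDA 9.2, and compares dotted version numbers numerically. The function names are hypothetical.

```python
from typing import Optional, Tuple

# (CUDA version, minimum Linux x86_64 driver, minimum Windows x86_64 driver),
# copied from the table, in ascending CUDA order.
DRIVER_MINIMUMS = [
    ("8.0", "375.26", "376.51"),
    ("9.0", "384.81", "385.54"),
    ("9.2", "396.26", "397.44"),
]


def _parse(version: str) -> Tuple[int, ...]:
    """Turn '384.81' into (384, 81) so versions compare numerically."""
    return tuple(int(part) for part in version.split("."))


def newest_cuda_for_driver(driver: str, windows: bool = False) -> Optional[str]:
    """Return the newest CUDA version in the table that this driver supports."""
    driver_v = _parse(driver)
    best = None
    for cuda, linux_min, windows_min in DRIVER_MINIMUMS:
        minimum = windows_min if windows else linux_min
        if driver_v >= _parse(minimum):
            best = cuda  # rows are ascending, so the last match is the newest
    return best


def cudatoolkit_spec(driver: str, windows: bool = False) -> Optional[str]:
    """Return a conda selector such as 'cudatoolkit=9.0', or None if unsupported."""
    cuda = newest_cuda_for_driver(driver, windows)
    return None if cuda is None else f"cudatoolkit={cuda}"
```

For example, a Linux machine with driver 390.48 gets `cudatoolkit=9.0`. That selector could then be added to the package list when creating the environment.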

Sometimes specific GPU hardware generations have a minimum CUDA version. As of August 27th, 2018, the only relevant constraint is that the Tesla V100 and Titan V (using the “Volta” GPU architecture) require CUDA 9 or later.
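This hardware constraint can be expressed as a small check. The sketch below assumes the GPU model is already known as a plain string such as "Tesla V100". Detecting it automatically is out of scope here. The set and function names are hypothetical.

```python
# GPUs named in the text as using the Volta architecture (as of August 2018).
VOLTA_GPUS = {"Tesla V100", "Titan V"}


def cuda_supports_gpu(gpu_model: str, cuda_version: str) -> bool:
    """Return False for a Volta GPU paired with CUDA older than 9; True otherwise."""
    major = int(cuda_version.split(".")[0])
    if gpu_model in VOLTA_GPUS:
        return major >= 9
    return True  # no other hardware constraint is listed in the text
```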

Available packages

TensorFlow

TensorFlow is a general machine learning library, but it is most popular for deep learning applications. There are three supported variants of the `tensorflow` package in Anaconda, one of which is the NVIDIA GPU version. This variant is selected by installing the meta-package `tensorflow-gpu`.

Other packages such as Keras depend on the generic `tensorflow` package name and will use whatever version of TensorFlow is installed. This makes it easy to switch between variants in an environment.

PyTorch

PyTorch is another machine learning library with a deep learning focus. PyTorch detects GPU availability at run-time, so the user does not need to install a different package for GPU support.
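Run-time detection means the same code path can pick a device when it starts. Here is a minimal sketch using `torch.cuda.is_available()`. It returns `None` when PyTorch is not installed, and the function name is hypothetical.

```python
from typing import Optional


def torch_device_name() -> Optional[str]:
    """Return 'cuda' or 'cpu' based on run-time GPU detection, or None without PyTorch."""
    try:
        import torch
    except ImportError:
        return None
    # The same PyTorch package works either way; the check happens at run time.
    return "cuda" if torch.cuda.is_available() else "cpu"


if __name__ == "__main__":
    name = torch_device_name()
    if name is not None:
        import torch
        x = torch.ones(3, device=name)  # tensor placed on the detected device
        print(name, x.sum().item())
```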

Caffe

Caffe was one of the first popular deep learning libraries.

Chainer/CuPy (Linux only)

Chainer is a deep learning library that uses NumPy or CuPy for computations.

Chainer’s companion project CuPy is a GPU-accelerated clone of the NumPy API that can be used as a drop-in replacement for NumPy with a few changes to user code. When CuPy is installed, Chainer is GPU-accelerated. CuPy can also be used on its own for general array computation.
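A common pattern for code that should run with either library is to choose the array module once and write the rest against it. Here is a minimal sketch that assumes NumPy is installed and treats CuPy as optional. The names `array_module` and `standardize` are hypothetical. CuPy also provides `cupy.get_array_module` for choosing a module based on an existing array.

```python
import importlib
from types import ModuleType


def array_module() -> ModuleType:
    """Return CuPy when it is installed and sees a GPU, otherwise NumPy."""
    try:
        cupy = importlib.import_module("cupy")
        if cupy.cuda.runtime.getDeviceCount() > 0:
            return cupy
    except Exception:  # CuPy missing, or no usable CUDA device/driver
        pass
    return importlib.import_module("numpy")


def standardize(values):
    """Scale to zero mean and unit variance; the same code runs on NumPy or CuPy."""
    xp = array_module()
    arr = xp.asarray(values, dtype=xp.float64)
    return (arr - arr.mean()) / arr.std()
```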

XGBoost

XGBoost is a machine learning library that implements gradient-boosted decision trees. Training several forms of trees is GPU-accelerated.
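In the XGBoost Python package, GPU training is requested through training parameters, and the parameter names changed in XGBoost 2.0. XGBoost 2.0 and later use `tree_method="hist"` with `device="cuda"`, while older releases use `tree_method="gpu_hist"`. The helper below is hypothetical and only builds the parameter dictionary. You would pass the result to `xgboost.train` or to an estimator such as `XGBClassifier(**params)`.

```python
def xgboost_gpu_params(xgboost_version: str) -> dict:
    """Return parameters that request GPU-accelerated tree construction."""
    major = int(xgboost_version.split(".")[0])
    if major >= 2:
        # XGBoost 2.0+: choose the device separately from the tree method.
        return {"tree_method": "hist", "device": "cuda"}
    # Pre-2.0 releases: the GPU histogram algorithm is its own tree method.
    return {"tree_method": "gpu_hist"}
```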

MXNet

MXNet is a machine learning library supported by various industry partners, most notably Amazon. Like TensorFlow, it comes in three variants, with the GPU variant selected by the `mxnet-gpu` meta-package.

Numba

Numba is a general-purpose JIT compiler for Python functions. It provides a way to implement custom GPU algorithms in purely Python syntax when the `cudatoolkit` package is present.

GPU support in Anaconda Enterprise

GPU-enabled conda packages can be used in AE 5 projects when the cluster has resource profiles that include GPUs. For more details, see the GPU support section of the AE 5 FAQ.